Ensenso 3D operation

How does AI laser dot triangulation work?

See in three dimensions with structured light

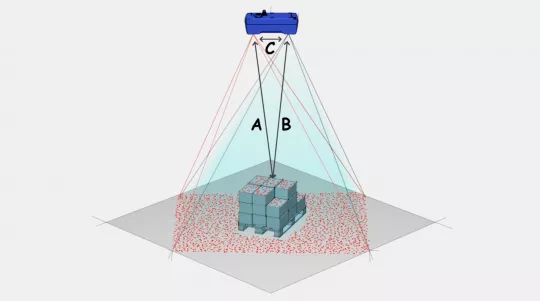

Ensenso S-series cameras work with structured light. An infrared (IR) laser projects a random pattern of dots into the object space, and a camera records the pattern from a slightly different position. Where objects reflect the light, the dot pattern in the camera image changes: each imaged laser dot deviates from its expected position by an amount that depends on how far the object is from the light source. These deviations of the dot positions form the basis of the depth information.

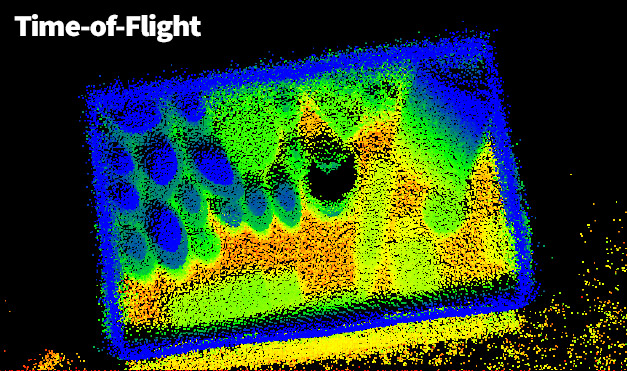

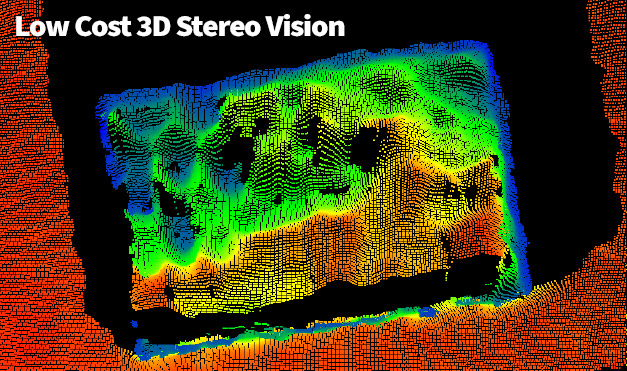

LIDAR and Time of Flight (ToF) are 3D methods that are also based on laser light. Unlike them, the Ensenso S does not derive the spatial depth of each captured projection point, and thus of the point cloud, from measurements of the light delay. Instead it uses triangulation, as the Ensenso Stereo Vision cameras do.

The calculation is based on the deviation of the laser point position (the "disparity") that results from two viewing angles, as in Stereo Vision. However, structured-light methods such as the one used in the Ensenso S record the object space with only one camera. How is it possible to extract positional differences of points from a single camera image of the projected pattern?

The projector itself provides the necessary information. Projection through a diffractive optical element (DOE) generates a fixed "point image" whose point positions are therefore known. With the distance and viewing angles of the two point images (the projected one and the recorded one) known, the triangulation procedure in the Ensenso software can determine the 3D coordinates of each visible laser point.
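The triangulation step can be illustrated with a minimal sketch. It is not the Ensenso implementation: it assumes an idealised, rectified pinhole geometry in which the projector is treated like a second camera displaced along the x axis, and all names and numbers (`Rig`, `focal_px`, `baseline_m`, `triangulate`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Rig:
    """Hypothetical, idealised projector-camera geometry (not Ensenso calibration data)."""
    focal_px: float    # focal length in pixels
    baseline_m: float  # projector offset from the camera along +x, in metres
    cx: float          # principal point column, in pixels
    cy: float          # principal point row, in pixels


def triangulate(rig, u_cam, v_cam, u_proj):
    """Return (X, Y, Z) in metres for one matched laser dot.

    u_cam, v_cam: dot position in the camera image (pixels).
    u_proj: column of the same dot in the known projector pattern (pixels).
    """
    disparity = u_cam - u_proj
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    z = rig.focal_px * rig.baseline_m / disparity  # depth from similar triangles
    x = (u_cam - rig.cx) * z / rig.focal_px        # back-project column to metres
    y = (v_cam - rig.cy) * z / rig.focal_px        # back-project row to metres
    return x, y, z


rig = Rig(focal_px=1000.0, baseline_m=0.1, cx=640.0, cy=480.0)
point = triangulate(rig, u_cam=740.0, v_cam=440.0, u_proj=540.0)
print(point)
```

Under these assumptions a disparity of 200 pixels corresponds to a depth of 0.5 m. Because depth is inversely proportional to disparity, a fixed pixel error in the dot position has a larger effect on depth for distant objects than for near ones.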

Structured light

The IR laser projects a fixed pattern of light dots into the object space. A diffractive optical element (DOE) creates the dot pattern: its fine microstructures split and diffract the laser light in a targeted way so that the desired light distribution is produced.

The DOE enables a homogeneous intensity distribution of the light and also ensures an almost loss-free emission of the beam energy. In combination with the narrow-band laser light, a dot pattern with very high contrast can thus be created even in low ambient lighting.

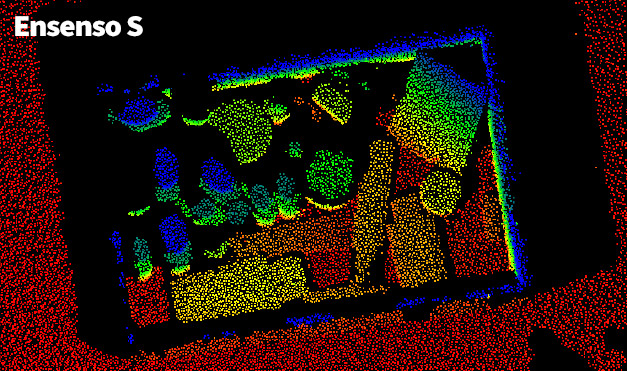

AI-accelerated point matching

To calculate depth information using triangulation, for each projection point the corresponding image point must first be determined. However, identifying one point among many is no trivial challenge for rule-based image processing algorithms when the expected point positions are shifted by light reflections on objects. The solution is called "artificial intelligence".

Which technology is better suited to recognising and classifying features with countless variations? For point identification in the camera image, the Ensenso software uses an artificial neural network (ANN) that was pre-trained with tilts and distortions of the pattern used.
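Why simple rule-based matching runs into trouble can be shown with a deliberately naive sketch. This is not the Ensenso matching method, only a hypothetical baseline: each observed dot on one image row is assigned to the closest known reference dot. The dot spacing and shifts are made-up values.

```python
def nearest_neighbour_match(reference_cols, observed_cols):
    """For each observed dot column, return the index of the closest reference dot."""
    matches = []
    for u in observed_cols:
        best = min(range(len(reference_cols)),
                   key=lambda i: abs(reference_cols[i] - u))
        matches.append(best)
    return matches


# Known dot columns on one image row, 20 px apart (hypothetical values).
reference = [100.0, 120.0, 140.0, 160.0]

# A small shift keeps every dot closest to its true partner.
small_shift = [u + 4.0 for u in reference]
# A shift larger than half the dot spacing makes a neighbouring dot look closer.
large_shift = [u + 14.0 for u in reference]

print(nearest_neighbour_match(reference, small_shift))  # [0, 1, 2, 3]
print(nearest_neighbour_match(reference, large_shift))  # [1, 2, 3, 3]
```

As soon as the shift exceeds half the dot spacing, the naive rule assigns dots to the wrong neighbours. A matcher that takes the local arrangement of surrounding dots into account, such as the pre-trained network described above, is one way to resolve this ambiguity.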

Advantages of AI laser point triangulation

High depth accuracy

Robust and geometrically precise 3D data with high depth accuracy due to high success rate of ANN point matching

Low light

Works in low ambient lighting conditions due to IR illumination

Fast capture

Fast image acquisition and evaluation, as only one image pair is processed

AI acceleration

Up to 20 point clouds per second due to ANN acceleration

No motion blur

Ideal for moving objects without motion blur due to short exposure time and high laser emission

(*) Ensenso S10 generates geometrically precise 3D data with high depth accuracy compared to 3D cameras with Time of Flight (ToF) or low-cost 3D stereo vision technology.

Ensenso Selector

You can now use the Ensenso camera selector to help you choose components. Simply enter your parameters in the online configuration tool and it will list the best possible combinations for your application.